The latest release of chat.nvim (v1.4.0) just landed, and it pushes Neovim toward being a legitimate AI hub rather than just a fancy prompt box. The plugin now supports multiple LLM providers, including OpenAI, Gemini, Anthropic, and Ollama, so developers can choose between hosted and local models without leaving their editor.

New Features and Provider Support

This release adds the Anthropic protocol and provider, Gemini support with automatic model list generation from the API, and native Ollama integration for those running local models. The :Chat command now includes preview functionality with Ctrl-O picker support, plus save/share/load capabilities, so conversations can be kept and passed along rather than lost when the session ends.

External Platform Integrations

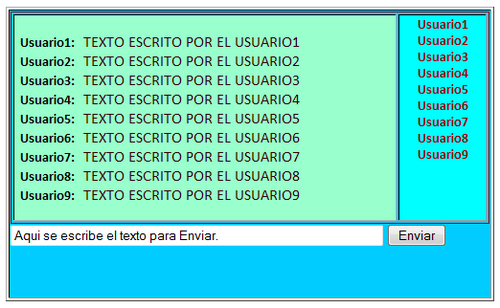

Where things get wild is the integration layer. Version 1.4.0 adds native bridges to DingTalk, Discord, Lark, Telegram, and WeCom, meaning you can pull messages from external chat platforms directly into your Neovim workflow. That's a proper hub mentality, not just another terminal-based chatbot.

Bug Fixes and Stability

The changelog shows solid bug-fixing work: proper LSP annotations for local values, session existence checks, error message handling across integrations, and fixes for edge cases like newlines in error messages. The Lark integration got particular attention with time-based message filtering and bot-only message handling.
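The changelog does not spell out how the Lark filtering works, so the following is only a minimal sketch of the general idea, not the plugin's code. It assumes each incoming message carries a Unix timestamp and a sender type; the `Message` fields, the `filter_messages` name, and the choice to skip bot-sent messages are all hypothetical, chosen for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Message:
    sender_type: str  # hypothetical field: "user" or "bot"
    create_time: int  # hypothetical field: Unix time in seconds
    text: str


def filter_messages(messages: List[Message], since: int, skip_bots: bool = True) -> List[Message]:
    """Keep messages created strictly after `since`, optionally dropping bot messages."""
    kept = []
    for m in messages:
        if m.create_time <= since:
            continue  # already seen on a previous poll
        if skip_bots and m.sender_type == "bot":
            continue  # assumed policy: do not feed the bot its own replies
        kept.append(m)
    return kept
```

Tracking the timestamp of the last processed message and passing it as `since` on the next poll is one simple way to avoid handling the same message twice.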

Key Takeaways

- Multi-provider support now spans OpenAI, Gemini, Anthropic, and Ollama

- External platform integrations bridge DingTalk, Discord, Lark, Telegram, and WeCom

- Preview and picker functionality makes :Chat more usable as a daily driver

- Save/share/load enables actual conversation persistence and sharing

The Bottom Line

This is the OpenClaw vision executed inside Neovim, where your editor becomes the central nervous system for AI interaction. The integration work is what's exciting here; we're not just talking to bots anymore, we're building a mesh between chat platforms and your development environment. That's the kind of infrastructure that actually moves the needle for developers living in terminals.